From The Department of Mechanical Engineering at The Massachusetts Institute of Technology

5.21.24

Sonny Oram | Edgerton Center

Audrey Chen ’24 landed an internship at NASA before she was old enough to drive. Here’s her secret to success.

Audrey Chen (left) and Jared Byars test the durability of their boat in the Charles River. Photo: Jessica Lam

Audrey Chen with the Arcturus autonomous boat team at the Edgerton Center 2023 Showcase. Photo: Jaypix Belmer

Audrey Chen ’24 lives by the philosophy that “a lot of opportunities only present themselves if you ask for them.” This approach has served her well, from becoming a NASA intern at 15 to running MIT’s autonomous boat team Arcturus to entering a leadership position at 3D printing technology company Formlabs right out of undergrad.

Growing up in Los Angeles, Chen showed a strong aptitude and passion for engineering at a young age and skipped several grades in math. In her first year of high school, she saw a posting about the Lab Space Academy at NASA’s Jet Propulsion Lab. Though the program was for juniors and seniors, she inquired if they would make an exception for her and they agreed. By her junior year she was helping run the program as deputy.

But Chen didn’t stop there: She had dreams of interning at NASA. She asked her mentor and became a drone air traffic control researcher at NASA at 15. “I was not old enough to drive,” Chen says. “High school would end, the bell would ring, and I would put on my backpack and I would run down the street to JPL. Can you imagine you’re the security guard at the gate of the Jet Propulsion Laboratory and a kid shows up for work?”

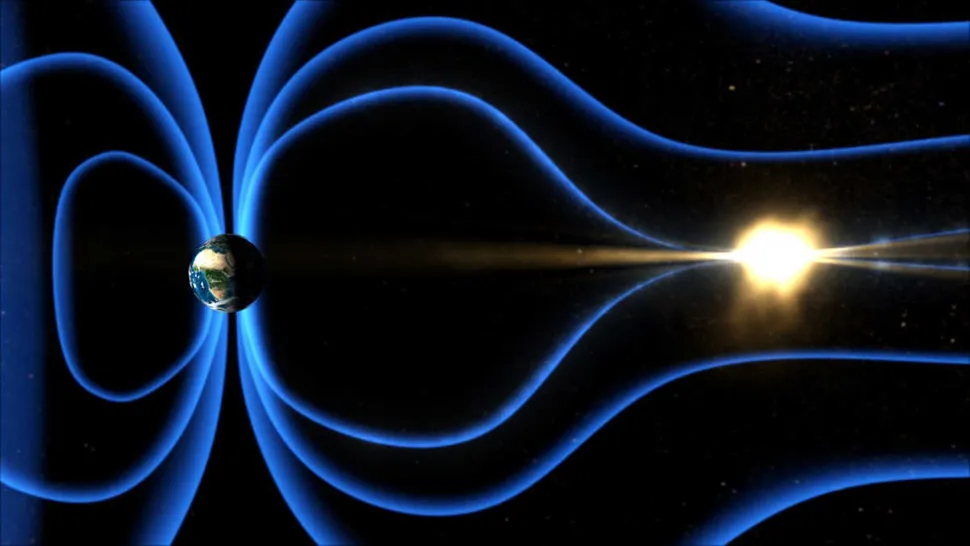

Chen worked on the Orbiting Arid Subsurfaces and Ice Sheet Sounder (OASIS) project, whose goal is to find and examine freshwater aquifers and ice sheets. “It was very early in the mission, so I was doing system and objective definition,” Chen says.

Next stop: MIT

After graduating high school, Chen ventured across the country to explore her eclectic interests at MIT. When she wasn’t fulfilling the requirements for her mechanical engineering degree, she could be found leather crafting, glass blowing, or table welding in one of MIT’s makerspaces, documenting MIT student life with her camera (earning the accolade “The Eyes of MIT” from MIT Admissions), working as a researcher sampling deep-sea sediment, or, notably, running the award-winning autonomous boat team Arcturus.

“Arcturus has been the highlight of my MIT career,” Chen says. She founded the team at MIT Sea Grant in 2022 along with a group of equally impassioned students who elected Chen as captain.

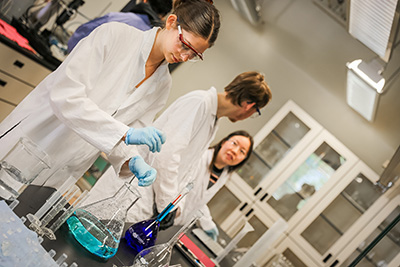

“I didn’t have any background in marine autonomy, so we pushed very hard to institute trainings and have lots of workshops so that they would feel comfortable coming in and contributing as soon as possible,” she recalls. Seeking additional funding and support, the team found a home at the MIT Edgerton Center.

Launching Arcturus

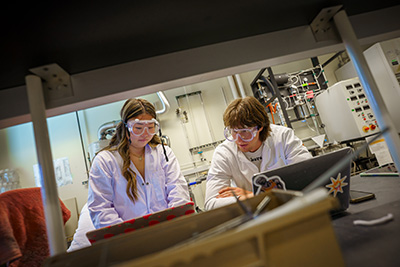

“Whenever I think about how Arcturus started and how it somehow still continues, I think it’s a miracle,” Chen says. “Our very first year, there were five of us at the Roboboat competition, and if any individual one of us had not decided to join the team, we either would not have a boat, we would not have electronics, we would not have code to run the boat, or we wouldn’t have funding to run the team.”

Chen’s first year as captain was a tremendous amount of work because the team was so small. In addition to managing the team and ensuring they met their goals on time, Chen also acted as the team’s business lead, treasurer, media lead, and photographer. “I was juggling a lot of things. Since then, those roles have further split amongst more people within the team,” she says.

Recruiting isn’t easy for an autonomous boat team, as many students don’t get marine robotics experience in high school. To keep their recruitment pool wide, Chen didn’t expect students to have background in autonomy or in marine systems. “Creating an environment that’s welcoming and friendly and supportive of people’s learning is crucial, because otherwise you won’t have a team. We’ve really pushed hard to recruit from a large body of people. We make sure to emphasize that we’re open to all majors, all years. As an industry, marine robotics, like most engineering, is very male-dominated. We work hard to recruit people of all genders and ethnicities.”

With Chen’s skillful recruiting, Arcturus grew from five members to 74 in 2024. The team flourished under her leadership, winning First Place Design Overall at the Roboboat competition in 2023.

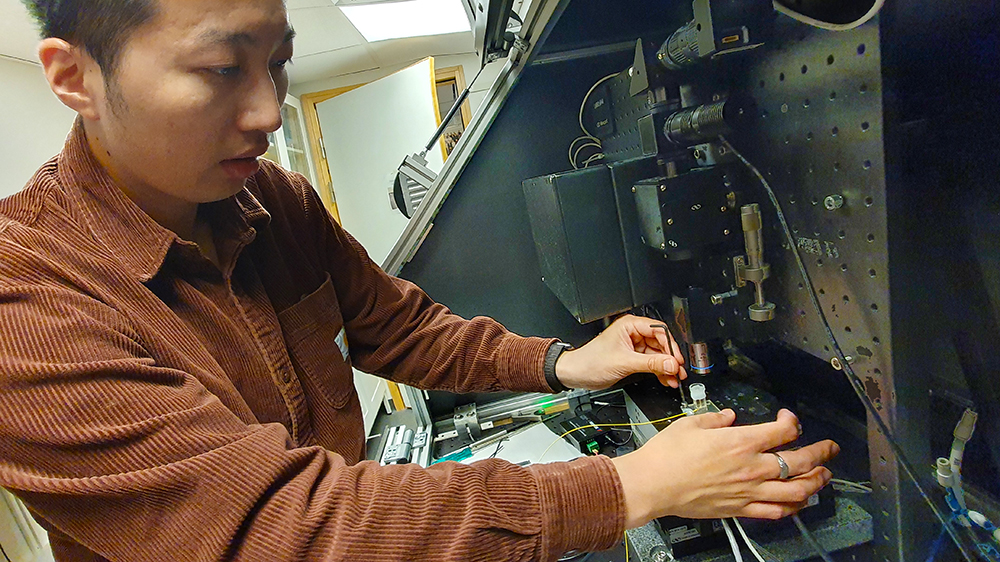

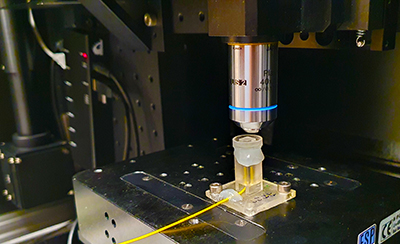

The challenges with autonomous boats

Chen was drawn to autonomous boats because the field is so full of potential. “You leave a robot on land and you turn it off, it doesn’t move by itself, versus you put it in a body of water and you don’t do anything, then it still moves because of the currents. It needs to be constantly taking in that input and trying to localize where it is,” Chen says.

Chen sees a lot of potential in the marine robotics industry to gather crucial data about our environment. “Autonomy in the marine space is not as well researched as land autonomy is. There’s immense potential for marine autonomy to benefit the world. You think about mapping ocean topology or looking for endangered species or habitat protection or surveying bleached coral reefs. As a vehicle, you have more flexibility to move around versus a buoy. That gives you the ability to take water and sediment samples across a wider spread of area. And by making it autonomous, you eliminate high labor costs, so the price per sample for a researcher would go down. These are different ways in which autonomy has potential to benefit the research sphere, but also, more broadly, the world.”

Chen graduated early this past February and passed Arcturus on to captains and rising juniors Ami Shi and Karen Guo. “They’re rock stars. The team is in good hands,” Chen says.

Becoming a project manager at Formlabs

After graduating, Chen accepted a project manager position at Formlabs. She brings many lessons from MIT to her work. “The biggest thing that I’ve learned is that I don’t need to know everything. Part of being successful is knowing what you don’t know. So I’m always aware that in every Arcturus meeting, and probably every technical meeting that I’ll be in at Formlabs, that I will not be the smartest person in the room. And that’s fine. I don’t need to be the smartest person ever because that’s not my job. My job is to bring these projects together and know enough about all the systems to integrate them.”

Chen is thrilled to stay near MIT after graduation, where she can visit her friends and continue mentoring Arcturus. Upon announcing her new job, she remarked, “To my friends at MIT, I’ll be just down the street, so you won’t be able to get rid of me that easily!”


The Department of Mechanical Engineering, commonly referred to as “Mech E,” was designated Course I of the six courses offered when classes began at the Massachusetts Institute of Technology in 1865. The course focused on the study of existing machinery and the principles behind their construction and operation.

In 1872 the department became Course II and Civil Engineering became Course I. That same year Assistant Professor Channing Whitaker, MIT class of 1869, began to teach mechanical engineering and redirected the emphasis of the course towards empirical studies. Whitaker proposed the use of in-house teaching laboratories and increased excursions to industrial and civil sites. In 1874 mechanical engineering’s first laboratory was built for direct application of current methodology to engineering problems. The education and research program of the new lab was applied in its approach and focused primarily on the steam engine.

Mechanical engineering became a formal department in 1883. The following specializations were offered: marine engineering (offered until 1913); locomotive engineering (offered until 1918); mill engineering, which eventually became textile engineering; and naval architecture, which became a separate department in 1894. In 1899 the option of heat and ventilation (offered until 1913) was introduced and in 1908, steam turbine engineering (offered until 1918).

Edward Miller, who became head of the department in 1911, designed the facilities for the department when the Institute moved from Boston to the “New Technology” in Cambridge, Mass., in 1916. New options during this period included engine design (1913-1925), automotive engineering (1923-1949), ordnance (1923-1924), and refrigeration, which became refrigeration and air conditioning.

The appointment of Jerome C. Hunsaker as department head in 1933 marked a major change in the direction of the department as he incorporated the aeronautics curriculum into mechanical engineering, and altered the traditional course in hydraulics into a study of the mechanics of fluids in general. He also modernized the laboratories.

The work that was carried out in the department between 1930 and the early 1960s served to codify many basic principles in the field of mechanical engineering. Seminal publications in dynamics, heat transfer, mechanics of materials, and thermodynamics were produced, and by the mid 1960s the department was renowned for pioneering the development of system dynamics and control and man-machine systems as fields of study within the profession.

In 1965 Ascher Shapiro became head of the department and furthered the shift towards applied mechanical engineering as the focus of research moved away from military applications to quality of life applications such as the environment and biomedical engineering. By the mid 1970s, continuing to the present (as of 1995), research was concentrated within four major programs: biomedical engineering; energy and environment; human services, including transportation; and manufacturing, materials, and materials processing.

The Department of Ocean Engineering merged with the Department of Mechanical Engineering effective January 1, 2005, and the merged department is known as the Department of Mechanical Engineering. Within the Department of Mechanical Engineering an undergraduate specialization in ocean engineering and graduate programs in Naval Architecture and Construction (previously XIII-A) and the Joint MIT-Woods Hole Oceanographic Institution Program (previously XIII-W) will continue.

The MIT School of Engineering is one of the five schools of the Massachusetts Institute of Technology, located in Cambridge, Massachusetts. The School of Engineering has eight academic departments and two interdisciplinary institutes. The School grants SB, MEng, SM, engineer’s degrees, and PhD or ScD degrees. The school is the largest at MIT as measured by undergraduate and graduate enrollments and faculty members.

Departments and initiatives:

Departments:

Aeronautics and Astronautics (Course 16)

Biological Engineering (Course 20)

Chemical Engineering (Course 10)

Civil and Environmental Engineering (Course 1)

Electrical Engineering and Computer Science (Course 6, joint department with MIT Schwarzman College of Computing)

Materials Science and Engineering (Course 3)

Mechanical Engineering (Course 2)

Nuclear Science and Engineering (Course 22)

Institutes:

Institute for Medical Engineering and Science

Health Sciences and Technology program (joint MIT-Harvard, “HST” in the course catalog)

(Departments and degree programs are commonly referred to by course catalog numbers on campus.)

Laboratories and research centers

Abdul Latif Jameel Water and Food Systems Lab

Center for Advanced Nuclear Energy Systems

Center for Computational Engineering

Center for Materials Science and Engineering

Center for Ocean Engineering

Center for Transportation and Logistics

Industrial Performance Center

Institute for Soldier Nanotechnologies

Koch Institute for Integrative Cancer Research

Laboratory for Information and Decision Systems

Laboratory for Manufacturing and Productivity

Materials Processing Center

Microsystems Technology Laboratories

MIT Lincoln Laboratory Beaver Works Center

Novartis-MIT Center for Continuous Manufacturing

Ocean Engineering Design Laboratory

Research Laboratory of Electronics

SMART Center

Sociotechnical Systems Research Center

Tata Center for Technology and Design

The Massachusetts Institute of Technology is a private land-grant research university in Cambridge, Massachusetts. The institute has an urban campus that extends more than a mile (1.6 km) alongside the Charles River. The institute also encompasses a number of major off-campus facilities such as the MIT Lincoln Laboratory, the MIT Bates Research and Engineering Center, and the Haystack Observatory, as well as affiliated laboratories such as the Broad Institute of MIT and Harvard and Whitehead Institute.

Founded in 1861 in response to the increasing industrialization of the United States, Massachusetts Institute of Technology adopted a European polytechnic university model and stressed laboratory instruction in applied science and engineering. It has since played a key role in the development of many aspects of modern science, engineering, mathematics, and technology, and is widely known for its innovation and academic strength. It is frequently regarded as one of the most prestigious universities in the world.

Nobel laureates, Turing Award winners, and Fields Medalists have been affiliated with MIT as alumni, faculty members, or researchers. In addition, National Medal of Science recipients, National Medals of Technology and Innovation recipients, MacArthur Fellows, Marshall Scholars, Mitchell Scholars, Schwarzman Scholars, astronauts, and Chief Scientists of the U.S. Air Force have been affiliated with The Massachusetts Institute of Technology. The university also has a strong entrepreneurial culture and MIT alumni have founded or co-founded many notable companies. Massachusetts Institute of Technology is a member of the Association of American Universities.

Foundation and vision

In 1859, a proposal was submitted to the Massachusetts General Court to use newly filled lands in Back Bay, Boston for a “Conservatory of Art and Science”, but the proposal failed. A charter for the incorporation of the Massachusetts Institute of Technology, proposed by William Barton Rogers, was signed by John Albion Andrew, the governor of Massachusetts, on April 10, 1861.

Rogers, a professor from the University of Virginia, wanted to establish an institution to address rapid scientific and technological advances. He did not wish to found a professional school, but a combination with elements of both professional and liberal education, proposing that:

“The true and only practicable object of a polytechnic school is, as I conceive, the teaching, not of the minute details and manipulations of the arts, which can be done only in the workshop, but the inculcation of those scientific principles which form the basis and explanation of them, and along with this, a full and methodical review of all their leading processes and operations in connection with physical laws.”

The Rogers Plan reflected the German research university model, emphasizing an independent faculty engaged in research, as well as instruction oriented around seminars and laboratories.

Early developments

Two days after The Massachusetts Institute of Technology was chartered, the first battle of the Civil War broke out. After a long delay through the war years, MIT’s first classes were held in the Mercantile Building in Boston in 1865. The new institute was founded as part of the Morrill Land-Grant Colleges Act to fund institutions “to promote the liberal and practical education of the industrial classes” and was a land-grant school. In 1863 under the same act, the Commonwealth of Massachusetts founded the Massachusetts Agricultural College, which developed as the University of Massachusetts Amherst. In 1866, the proceeds from land sales went toward new buildings in the Back Bay.

The Massachusetts Institute of Technology was informally called “Boston Tech”. The institute adopted the European polytechnic university model and emphasized laboratory instruction from an early date. Despite chronic financial problems, the institute saw growth in the last two decades of the 19th century under President Francis Amasa Walker. Programs in electrical, chemical, marine, and sanitary engineering were introduced, new buildings were built, and the size of the student body increased to more than one thousand.

The curriculum drifted to a vocational emphasis, with less focus on theoretical science. The fledgling school still suffered from chronic financial shortages which diverted the attention of the MIT leadership. During these “Boston Tech” years, Massachusetts Institute of Technology faculty and alumni rebuffed Harvard University president (and former MIT faculty) Charles W. Eliot’s repeated attempts to merge MIT with Harvard College’s Lawrence Scientific School. There would be at least six attempts to absorb MIT into Harvard. In its cramped Back Bay location, MIT could not afford to expand its overcrowded facilities, driving a desperate search for a new campus and funding. Eventually, the MIT Corporation approved a formal agreement to merge with Harvard, over the vehement objections of MIT faculty, students, and alumni. However, a 1917 decision by the Massachusetts Supreme Judicial Court effectively put an end to the merger scheme.

In 1916, The Massachusetts Institute of Technology administration and the MIT charter crossed the Charles River on the ceremonial barge Bucentaur built for the occasion, to signify MIT’s move to a spacious new campus largely consisting of filled land on a one-mile-long (1.6 km) tract along the Cambridge side of the Charles River. The neoclassical “New Technology” campus was designed by William W. Bosworth and had been funded largely by anonymous donations from a mysterious “Mr. Smith”, starting in 1912. In January 1920, the donor was revealed to be the industrialist George Eastman of Rochester, New York, who had invented methods of film production and processing, and founded Eastman Kodak. Between 1912 and 1920, Eastman donated $20 million ($236.6 million in 2015 dollars) in cash and Kodak stock to MIT.

Curricular reforms

In the 1930s, President Karl Taylor Compton and Vice-President (effectively Provost) Vannevar Bush emphasized the importance of pure sciences like physics and chemistry and reduced the vocational practice required in shops and drafting studios. The Compton reforms “renewed confidence in the ability of the Institute to develop leadership in science as well as in engineering”. Unlike Ivy League schools, Massachusetts Institute of Technology catered more to middle-class families, and depended more on tuition than on endowments or grants for its funding. The school was elected to the Association of American Universities in 1934.

Still, as late as 1949, the Lewis Committee lamented in its report on the state of education at The Massachusetts Institute of Technology that “the Institute is widely conceived as basically a vocational school”, a “partly unjustified” perception the committee sought to change. The report comprehensively reviewed the undergraduate curriculum, recommended offering a broader education, and warned against letting engineering and government-sponsored research detract from the sciences and humanities. The School of Humanities, Arts, and Social Sciences and the MIT Sloan School of Management were formed in 1950 to compete with the powerful Schools of Science and Engineering. Previously marginalized faculties in the areas of economics, management, political science, and linguistics emerged into cohesive and assertive departments by attracting respected professors and launching competitive graduate programs. The School of Humanities, Arts, and Social Sciences continued to develop under the successive terms of the more humanistically oriented presidents Howard W. Johnson and Jerome Wiesner between 1966 and 1980.

The Massachusetts Institute of Technology’s involvement in military science surged during World War II. In 1941, Vannevar Bush was appointed head of the federal Office of Scientific Research and Development and directed funding to only a select group of universities, including MIT. Engineers and scientists from across the country gathered at Massachusetts Institute of Technology’s Radiation Laboratory, established in 1940 to assist the British military in developing microwave radar. The work done there significantly affected both the war and subsequent research in the area. Other defense projects included gyroscope-based and other complex control systems for gunsight, bombsight, and inertial navigation under Charles Stark Draper’s Instrumentation Laboratory; the development of a digital computer for flight simulations under Project Whirlwind; and high-speed and high-altitude photography under Harold Edgerton. By the end of the war, The Massachusetts Institute of Technology became the nation’s largest wartime R&D contractor (attracting some criticism of Bush), employing nearly 4000 in the Radiation Laboratory alone and receiving in excess of $100 million ($1.2 billion in 2015 dollars) before 1946. Work on defense projects continued even after then. Post-war government-sponsored research at MIT included SAGE and guidance systems for ballistic missiles and Project Apollo.

These activities affected The Massachusetts Institute of Technology profoundly. A 1949 report noted the lack of “any great slackening in the pace of life at the Institute” to match the return to peacetime, remembering the “academic tranquility of the prewar years”, though acknowledging the significant contributions of military research to the increased emphasis on graduate education and rapid growth of personnel and facilities. The faculty doubled and the graduate student body quintupled during the terms of Karl Taylor Compton, president of The Massachusetts Institute of Technology between 1930 and 1948; James Rhyne Killian, president from 1948 to 1957; and Julius Adams Stratton, chancellor from 1952 to 1957, whose institution-building strategies shaped the expanding university. By the 1950s, The Massachusetts Institute of Technology no longer simply benefited the industries with which it had worked for three decades, and it had developed closer working relationships with new patrons, philanthropic foundations and the federal government.

In the late 1960s and early 1970s, student and faculty activists protested against the Vietnam War and The Massachusetts Institute of Technology’s defense research. In this period Massachusetts Institute of Technology’s various departments were researching helicopters, smart bombs and counterinsurgency techniques for the war in Vietnam as well as guidance systems for nuclear missiles. The Union of Concerned Scientists was founded on March 4, 1969 during a meeting of faculty members and students seeking to shift the emphasis on military research toward environmental and social problems. The Massachusetts Institute of Technology ultimately divested itself from the Instrumentation Laboratory and moved all classified research off-campus to the MIT Lincoln Laboratory facility in 1973 in response to the protests. The student body, faculty, and administration remained comparatively unpolarized during what was a tumultuous time for many other universities. Johnson was seen to be highly successful in leading his institution to “greater strength and unity” after these times of turmoil. However, six Massachusetts Institute of Technology students were sentenced to prison terms at this time and some former student leaders, such as Michael Albert and George Katsiaficas, are still indignant about MIT’s role in military research and its suppression of these protests. (Richard Leacock’s film, November Actions, records some of these tumultuous events.)

In the 1980s, there was more controversy at The Massachusetts Institute of Technology over its involvement in SDI (space weaponry) and CBW (chemical and biological warfare) research. More recently, The Massachusetts Institute of Technology’s research for the military has included work on robots, drones and ‘battle suits’.

Recent history

The Massachusetts Institute of Technology has kept pace with and helped to advance the digital age. In addition to developing the predecessors to modern computing and networking technologies, students, staff, and faculty members at Project MAC, the Artificial Intelligence Laboratory, and the Tech Model Railroad Club wrote some of the earliest interactive computer video games like Spacewar! and created much of modern hacker slang and culture. Several major computer-related organizations have originated at MIT since the 1980s: Richard Stallman’s GNU Project and the subsequent Free Software Foundation were founded in the mid-1980s at the AI Lab; the MIT Media Lab was founded in 1985 by Nicholas Negroponte and Jerome Wiesner to promote research into novel uses of computer technology; the World Wide Web Consortium standards organization was founded at the Laboratory for Computer Science in 1994 by Tim Berners-Lee; the MIT OpenCourseWare project has made course materials for over 2,000 Massachusetts Institute of Technology classes available online free of charge since 2002; and the One Laptop per Child initiative to expand computer education and connectivity to children worldwide was launched in 2005.

The Massachusetts Institute of Technology was named a sea-grant college in 1976 to support its programs in oceanography and marine sciences and was named a space-grant college in 1989 to support its aeronautics and astronautics programs. Despite diminishing government financial support over the past quarter century, MIT launched several successful development campaigns to significantly expand the campus: new dormitories and athletics buildings on west campus; the Tang Center for Management Education; several buildings in the northeast corner of campus supporting research into biology, brain and cognitive sciences, genomics, biotechnology, and cancer research; and a number of new “backlot” buildings on Vassar Street including the Stata Center. Construction on campus in the 2000s included expansions of the Media Lab, the Sloan School’s eastern campus, and graduate residences in the northwest. In 2006, President Hockfield launched the MIT Energy Research Council to investigate the interdisciplinary challenges posed by increasing global energy consumption.

In 2001, inspired by the open source and open access movements, The Massachusetts Institute of Technology launched “OpenCourseWare” to make the lecture notes, problem sets, syllabi, exams, and lectures from the great majority of its courses available online for no charge, though without any formal accreditation for coursework completed. While the cost of supporting and hosting the project is high, OCW expanded in 2005 to include other universities as a part of the OpenCourseWare Consortium, which currently includes more than 250 academic institutions with content available in at least six languages. In 2011, The Massachusetts Institute of Technology announced it would offer formal certification (but not credits or degrees) to online participants completing coursework in its “MITx” program, for a modest fee. The “edX” online platform supporting MITx was initially developed in partnership with Harvard and its analogous “Harvardx” initiative. The courseware platform is open source, and other universities have already joined and added their own course content. In March 2009 the Massachusetts Institute of Technology faculty adopted an open-access policy to make its scholarship publicly accessible online.

The Massachusetts Institute of Technology has its own police force. Three days after the Boston Marathon bombing of April 2013, MIT Police patrol officer Sean Collier was fatally shot by the suspects Dzhokhar and Tamerlan Tsarnaev, setting off a violent manhunt that shut down the campus and much of the Boston metropolitan area for a day. One week later, Collier’s memorial service was attended by more than 10,000 people, in a ceremony hosted by the Massachusetts Institute of Technology community with thousands of police officers from the New England region and Canada. On November 25, 2013, The Massachusetts Institute of Technology announced the creation of the Collier Medal, to be awarded annually to “an individual or group that embodies the character and qualities that Officer Collier exhibited as a member of The Massachusetts Institute of Technology community and in all aspects of his life”. The announcement further stated that “Future recipients of the award will include those whose contributions exceed the boundaries of their profession, those who have contributed to building bridges across the community, and those who consistently and selflessly perform acts of kindness”.

In September 2017, the school announced the creation of an artificial intelligence research lab called the MIT-IBM Watson AI Lab. IBM will spend $240 million over the next decade, and the lab will be staffed by MIT and IBM scientists. In October 2018 MIT announced that it would open a new Schwarzman College of Computing dedicated to the study of artificial intelligence, named after lead donor and The Blackstone Group CEO Stephen Schwarzman. The focus of the new college is to study not just AI, but interdisciplinary AI education, and how AI can be used in fields as diverse as history and biology. The cost of buildings and new faculty for the new college is expected to be $1 billion upon completion.

The Caltech/MIT Advanced aLIGO was designed and constructed by a team of scientists from California Institute of Technology, Massachusetts Institute of Technology, and industrial contractors, and funded by the National Science Foundation.

It was designed to open the field of gravitational-wave astronomy through the detection of gravitational waves predicted by general relativity. Gravitational waves were detected for the first time by the LIGO detector in 2015. For contributions to the LIGO detector and the observation of gravitational waves, two Caltech physicists, Kip Thorne and Barry Barish, and Massachusetts Institute of Technology physicist Rainer Weiss won the Nobel Prize in physics in 2017. Weiss, who is also a Massachusetts Institute of Technology graduate, designed the laser interferometric technique, which served as the essential blueprint for the LIGO.

The mission of The Massachusetts Institute of Technology is to advance knowledge and educate students in science, technology, and other areas of scholarship that will best serve the nation and the world in the twenty-first century. We seek to develop in each member of The Massachusetts Institute of Technology community the ability and passion to work wisely, creatively, and effectively for the betterment of humankind.
